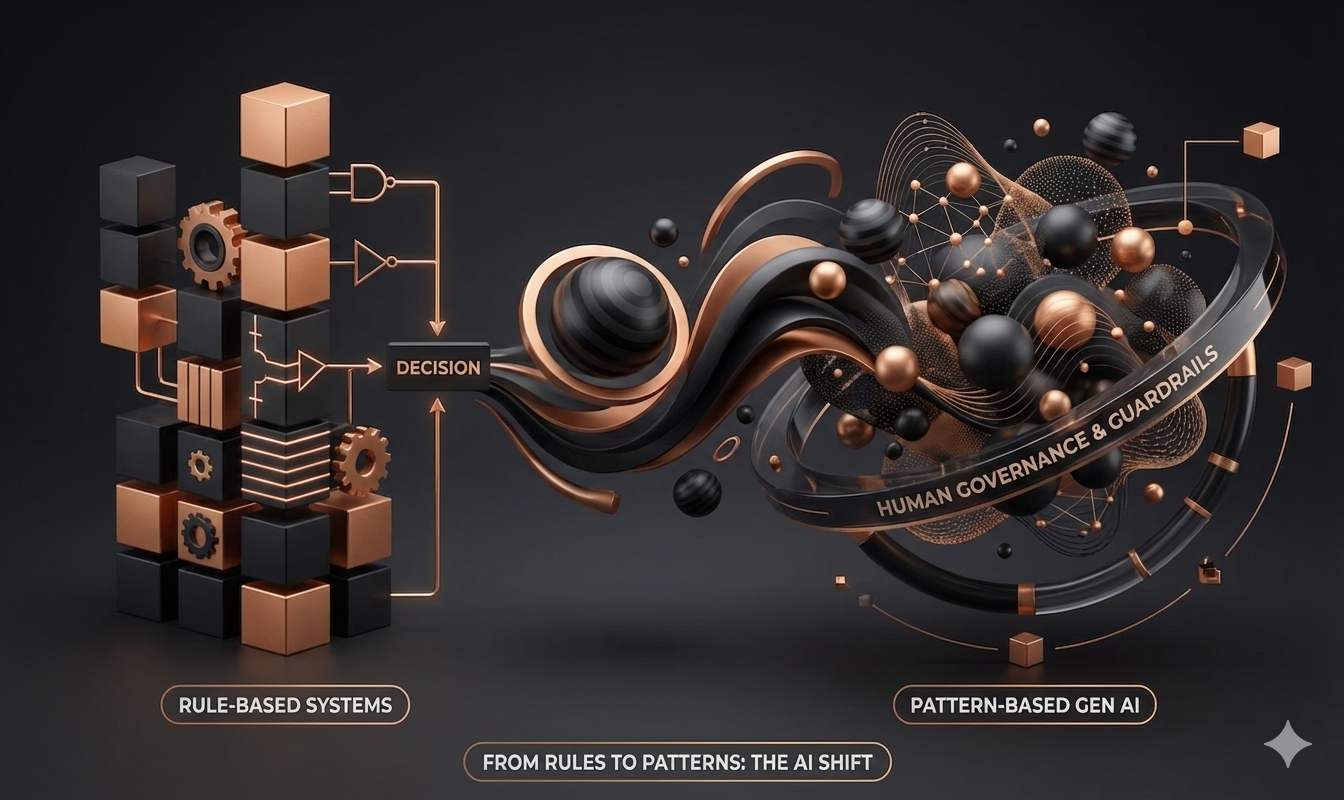

From Rules to Patterns

Why today’s AI can be powerful and unpredictable, and what leaders can control around it.

An audit finding you’ve probably seen coming

In a recent Gen AI pilot, internal audit flags something uncomfortable: two teams asked the same question, got different answers, and nobody could clearly explain why.

That’s not a “bug” in the usual software sense. It’s a clue that your organization is using a different kind of machine intelligence, one that behaves more like a pattern generator than a rule book.

Two traditions that fail differently under pressure

Older enterprise “AI” was often symbolic: business rules, decision tables, logic trees. The knowledge lived in rules people wrote down. That made it transparent, repeatable, and audit-friendly, exactly why governance teams trust it.

Generative AI sits in the statistical tradition: it learns patterns from huge amounts of data. Instead of “follow these rules,” it’s closer to “learn from many examples, then predict what fits.”

This shift matters because your governance questions change from “did we code the rule correctly?” to “what did it learn, and how does it behave across cases?”

Why it sounds confident even when it’s wrong

A large language model generates text by repeatedly predicting a likely next word (or token) from the context you give it. The result is fluent output that can summarize, draft, compare options, and explain concepts. But the process is probabilistic, not deterministic.

For the same prompt, there may be several plausible “next steps,” not one fixed answer. Settings like “temperature” can make outputs more consistent or more exploratory.
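To make the temperature idea concrete, here is a minimal sketch in Python. It is a toy illustration, not how any particular model is implemented: the candidate words and their scores are invented, and the function names are hypothetical.

```python
import math
import random

def softmax_with_temperature(scores, temperature):
    """Turn raw scores into probabilities; a lower temperature sharpens them."""
    scaled = [s / temperature for s in scores]
    peak = max(scaled)  # subtract the max for numerical stability
    weights = [math.exp(s - peak) for s in scaled]
    total = sum(weights)
    return [w / total for w in weights]

def sample_next_word(candidates, scores, temperature, rng):
    """Pick one candidate at random, weighted by its probability."""
    probs = softmax_with_temperature(scores, temperature)
    return rng.choices(candidates, weights=probs, k=1)[0]

# Invented candidates and scores for the word after "The claim is ..."
candidates = ["approved", "pending", "rejected"]
scores = [2.0, 1.5, 0.5]

rng = random.Random(7)  # fixed seed so repeated runs of this sketch match
for temperature in (0.2, 1.0, 2.0):
    probs = softmax_with_temperature(scores, temperature)
    picks = [sample_next_word(candidates, scores, temperature, rng) for _ in range(10)]
    print(temperature, [round(p, 2) for p in probs], picks)
```

At a low temperature the top-scoring word takes almost all of the probability, so repeated runs mostly agree. At a higher temperature the probabilities flatten and the picks spread out, which is the "different answers to the same question" effect an auditor might notice.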

This also explains hallucinations: the model is optimizing for what looks plausible, not what is true. In a governance context, that means Gen AI is never an oracle. It’s an assistant that must be wrapped in verification.

Your advantage is knowing where rules still own the decision

In regulated and reputation-sensitive environments (financial services, healthcare, critical infrastructure, even large procurement), you still need determinism in the places regulators and customers expect it: eligibility checks, threshold rules, escalation criteria, approvals.

Where Gen AI earns its keep is earlier in the workflow, where humans already interpret messy inputs: long documents, emails, complaints, meeting notes, bilingual materials, ambiguous requirements. It can extract, summarize, and propose drafts. Your rules engine, controls, and people then make the final call.
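The handoff described above can be sketched in a few lines of Python. Everything here is an assumption made for illustration: the `DraftExtraction` fields, the `APPROVAL_LIMIT` value, and the routing messages are hypothetical, and the model call itself is left out so that only the deterministic step is shown.

```python
from dataclasses import dataclass

@dataclass
class DraftExtraction:
    """What a model might propose after reading a messy complaint email (hypothetical fields)."""
    customer_id: str
    claimed_amount: float
    summary: str

APPROVAL_LIMIT = 5000.00  # hypothetical threshold, owned by policy rather than by the model

def rule_decision(draft: DraftExtraction) -> str:
    """Deterministic step: the same input always produces the same outcome."""
    if not draft.customer_id:
        return "escalate: missing customer id"
    if draft.claimed_amount > APPROVAL_LIMIT:
        return "escalate: above approval limit"
    return "route to standard handling"

# The draft stands in for model output; a person or a rule still decides what happens next.
draft = DraftExtraction("C-1042", 7200.00, "Customer disputes a duplicate charge.")
print(rule_decision(draft))
```

The design point is the separation: the model may phrase the summary differently each time, but the threshold check behaves identically on every run and can be audited like any other business rule.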

In Malaysian workplaces, you’re often also managing hierarchy and accountability. So the practical leadership move is to make the “mode” explicit:

- Pattern mode (assist, draft, explore options)

- Rule mode (decide, comply, enforce constraints)

Control the system around the model

You usually can’t hard-code every ethical outcome into the model. What you can do is engineer control layers:

- Data governance (what it can learn from / see)

- Guardrails and filters (what it must not output)

- Retrieval and citations (ground it in approved sources)

- Workflow gates (when it’s allowed to act)

- Monitoring + sampling (spot drift, bias, recurring errors)

- Human-in-the-loop escalation (low confidence → review)
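A few of these layers can be sketched as a simple wrapper around a drafted answer. This is a minimal illustration under stated assumptions, not a production design: the blocked pattern, the source names, and the threshold are invented, and the `confidence` score is assumed to come from some upstream component rather than from the model itself.

```python
import re

# All values below are illustrative assumptions, not recommended settings.
BLOCKED_PATTERNS = [re.compile(r"\b\d{6}-\d{2}-\d{4}\b")]  # strings shaped like an ID number
APPROVED_SOURCES = {"policy-handbook", "product-faq"}       # hypothetical source names
REVIEW_THRESHOLD = 0.75                                     # hypothetical confidence cut-off

def apply_control_layers(answer, cited_sources, confidence):
    """Decide whether a drafted answer is released, sent to a reviewer, or blocked."""
    # Guardrail: block output containing content it must not reveal.
    if any(pattern.search(answer) for pattern in BLOCKED_PATTERNS):
        return {"status": "blocked", "reason": "restricted content in output"}
    # Grounding: require at least one citation, and only approved sources.
    if not cited_sources or not set(cited_sources) <= APPROVED_SOURCES:
        return {"status": "needs_review", "reason": "missing or unapproved citation"}
    # Escalation: low confidence goes to a human reviewer.
    if confidence < REVIEW_THRESHOLD:
        return {"status": "needs_review", "reason": "low confidence"}
    return {"status": "released", "reason": "passed all checks"}

print(apply_control_layers("Refunds take 14 days.", ["policy-handbook"], 0.9))
print(apply_control_layers("Refunds take 14 days.", [], 0.9))
```

Each check is ordinary, deterministic code that sits outside the model, which is why it can be reviewed, tested, and changed without retraining anything.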

Start with low-stakes use cases where variation is acceptable (drafting, summarising, brainstorming). Build a small test set of real examples, measure error patterns, and only then expand.
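One way to picture "build a small test set and measure error patterns" is the sketch below. The test cases, the category names, and the `stub_assistant` function are all invented so the example runs on its own; a real check would call your actual assistant and would likely need a more careful comparison than a simple substring match.

```python
from collections import Counter

def error_rates_by_category(test_cases, answer_fn):
    """Return the share of wrong answers per category for a small test set."""
    totals = Counter()
    errors = Counter()
    for case in test_cases:
        totals[case["category"]] += 1
        answer = answer_fn(case["question"])
        # Simple check: the approved fact must appear somewhere in the answer.
        if case["must_contain"].lower() not in answer.lower():
            errors[case["category"]] += 1
    return {category: errors[category] / totals[category] for category in totals}

# Hypothetical test cases; in practice these would be real, reviewed examples.
test_cases = [
    {"category": "leave policy", "question": "How many days of annual leave?", "must_contain": "14 days"},
    {"category": "leave policy", "question": "Can leave be carried forward?", "must_contain": "up to 5 days"},
    {"category": "claims", "question": "What is the claim deadline?", "must_contain": "30 days"},
]

def stub_assistant(question):
    """Stand-in for the real assistant so the sketch is self-contained."""
    canned = {
        "How many days of annual leave?": "Staff receive 14 days of annual leave.",
        "Can leave be carried forward?": "Yes, all unused leave carries forward.",
        "What is the claim deadline?": "Claims must be filed within 30 days.",
    }
    return canned[question]

print(error_rates_by_category(test_cases, stub_assistant))
```

Grouping errors by category is the useful part: it shows where the assistant is unreliable, which tells you where to add verification before widening the rollout.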

- Which parts must be deterministic, and why?

- What guardrails, verification, and escalation exist around the model?

- How will we monitor behaviour over time, not just at go-live?

You don’t lose control with Gen AI; you relocate it from “writing rules” to “governing patterns and the system around them.”

Gen AI Governance Sandbox: a 3-minute simulator

Use this tool to experience what the article explains:

- Gen AI can vary for the same question because it predicts patterns, not rules.

- It can sound confident even when it is unverified.

- Leaders regain control by adding verification and control layers around the model.

How to use it:

- Try Variation: move the Exploration slider and watch the answers change.

- Use Verification to check against approved facts.

- Turn on Control Layers to see outputs become safer and more consistent.

Why this helps learning stick: It creates a fast feedback loop that updates your expectations of how Gen AI behaves, so you can adopt it with clearer boundaries and less fear.

- Pattern mode produces plausible text, not guaranteed truth.

- The same question can yield different answers under variation.

- Audit questions shift from “did we code the rule?” to “how does it behave across cases?”

- The assistant can sound confident while still being unverified.

- Verification is a system responsibility: retrieval, citations, and review gates.

- Determinism still owns approvals, thresholds, eligibility, escalation.
