How AI Sees Your Words: Tokens and Probabilities

Understanding the Linguistic Tax, Context Limits, and the Mechanics of AI Hallucinations

The Illusion of Understanding

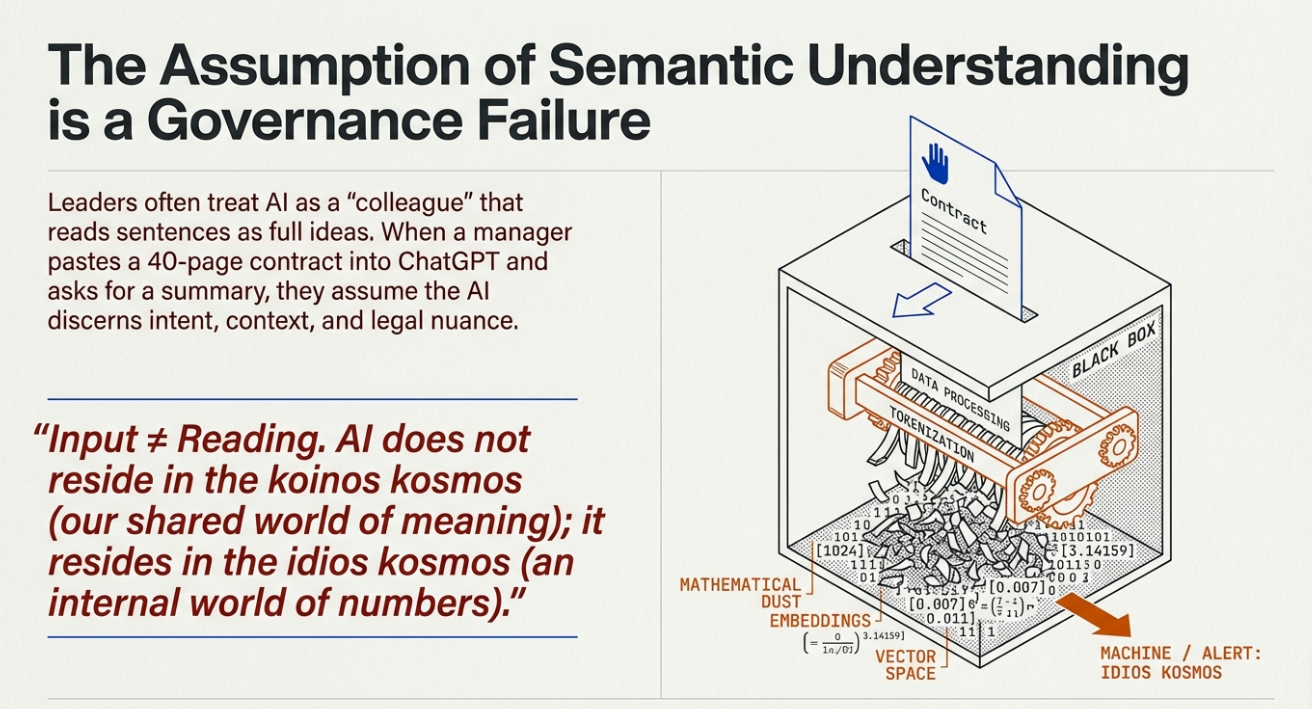

Imagine staring at a forty-page, highly complex contract with a looming deadline. You decide to paste the entire document into a generative AI tool, asking it to summarise the key risks. Naturally, you assume the system will read and understand the text with the full semantic nuance of a seasoned legal expert, discerning the subtle implications buried in that dense legalese. That is the first mistake.

As The Leader’s Guide to Tokens and Probabilities points out, the assumption that AI reads sentences as we do, as full ideas, is a common and misleading one. I have seen how this gap between expectation and reality creates a significant trap for boards.

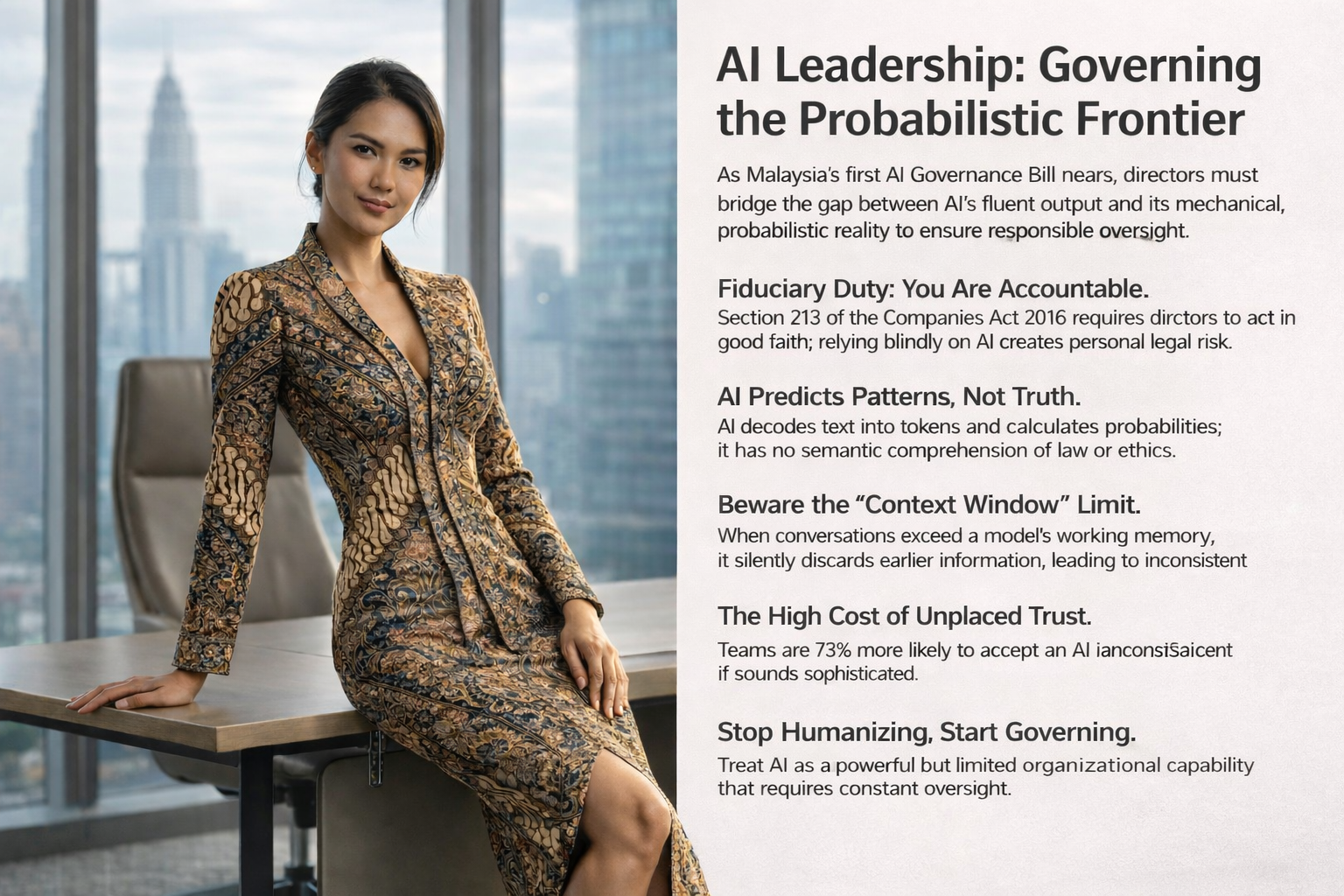

In my experience, especially under the Malaysian Companies Act 2016, relying on a machine that lacks genuine comprehension could lead you straight into a breach of your fiduciary duty of care. These systems do not see intent; they simply process information by breaking it down into mathematical units called tokens.

When Memory Fails Under Pressure

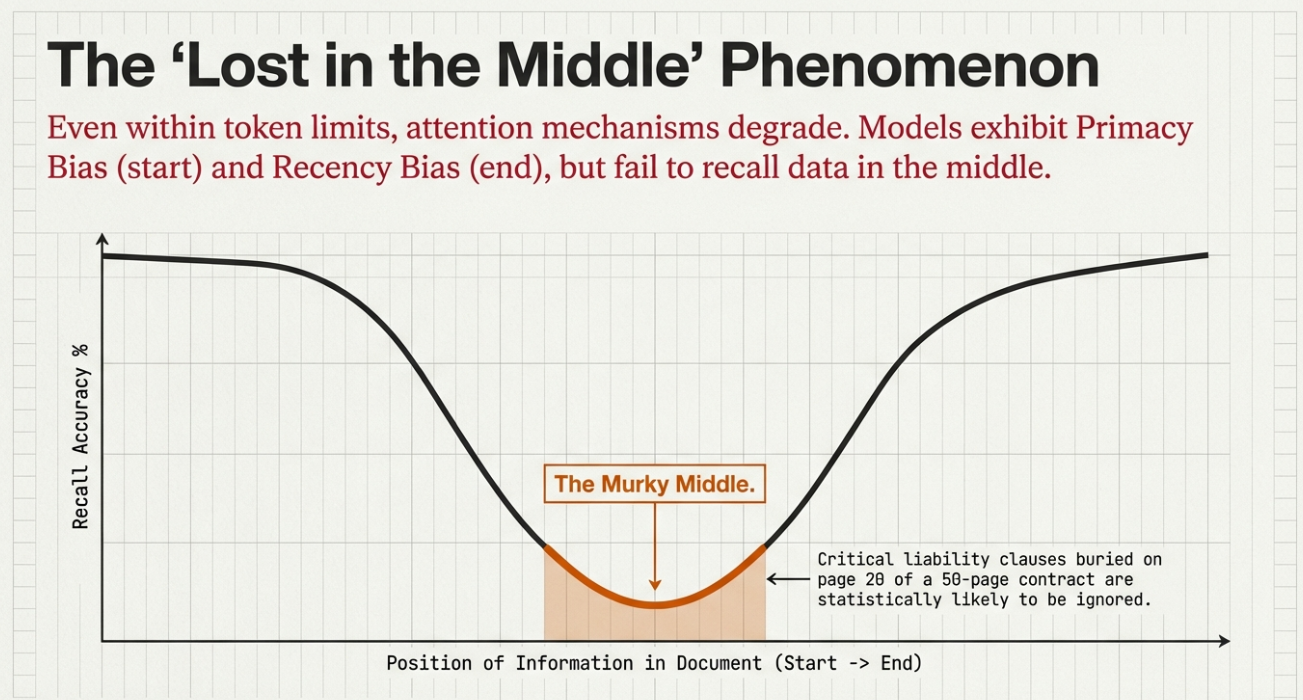

We have to accept that these models have a very limited “working memory.” The Leader’s Guide refers to this as the context window, which is the maximum number of tokens a model can reference in one go. If you give it a document that is too long, the machine just starts ignoring or forgetting the earlier parts. I think of it as a small desk where the old papers fall off the edge as you add new ones.
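To make the small-desk picture concrete, here is a minimal sketch in Python. It assumes the simplest possible behaviour, where only the most recent tokens stay visible; real tools differ (some refuse over-long input, others summarise or truncate differently), and the function name and the ten-clause "document" are hypothetical.

```python
def fit_to_context_window(tokens, window_size):
    """Keep only the most recent `window_size` tokens; older ones fall off the desk."""
    if window_size <= 0:
        return []
    return tokens[-window_size:]


# A toy "contract" of ten tokens, fed to a model whose window holds only four.
document = [f"clause_{i}" for i in range(1, 11)]
visible = fit_to_context_window(document, window_size=4)
dropped = document[: len(document) - len(visible)]

print("still on the desk:", visible)
print("fallen off the edge:", dropped)
```

In this toy run the first six clauses are no longer visible to the model at all, which is exactly the risk with the opening pages of a long contract.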

For that forty-page contract we mentioned, the guide warns that a model with a small window might effectively forget the critical clauses from the first ten pages by the time it reaches the end. This is why we cannot treat these tools as standalone decision-makers. You cannot replace ethical judgement or accountability with a statistical prediction.

The final authority must rest with a human expert because the contextual understanding of a person is irreplaceable.

The Hidden Price of Every Word

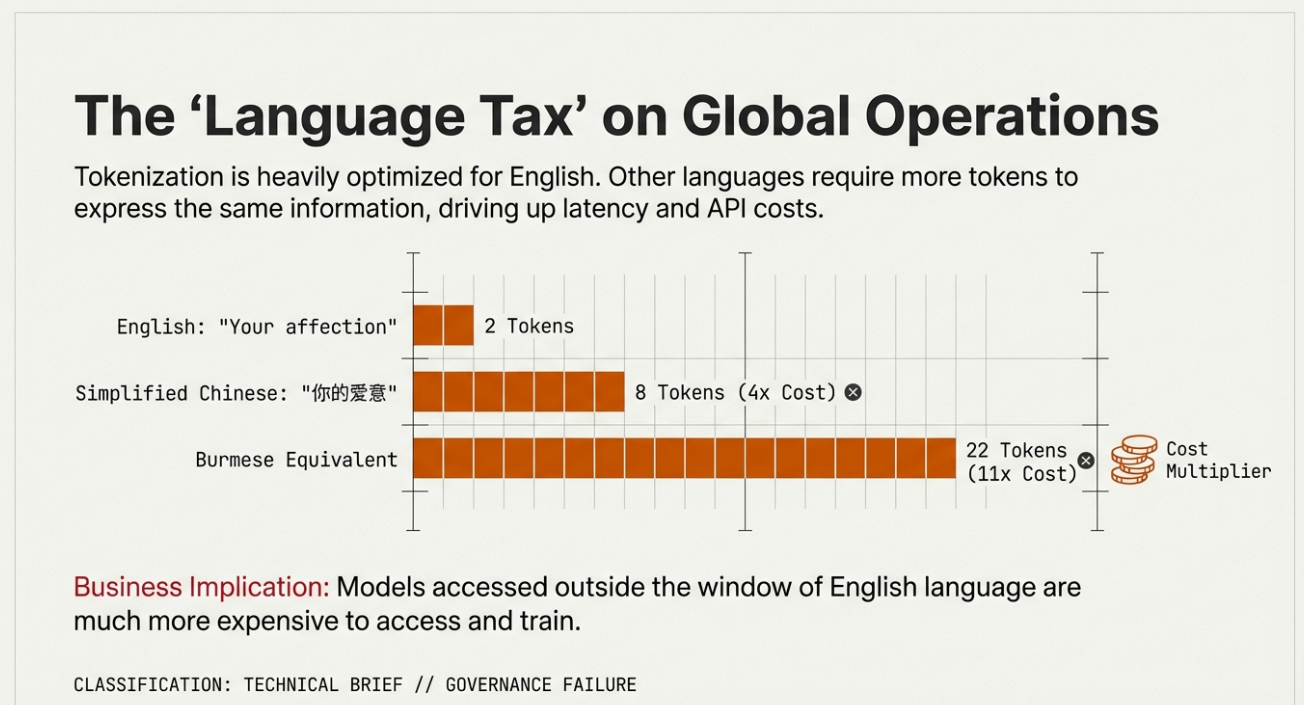

These models see the world in numbers. The Leader’s Guide explains that before a neural network can even touch your language, it must translate everything into a numerical format. This foundational stage, known as tokenisation, turns words or punctuation into unique IDs that can be processed mathematically.
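A rough illustration of that translation step, written in Python. This is a deliberately simplified, word-level sketch of my own; production tokenisers typically split text into sub-word pieces (for example with byte-pair encoding), so the function names and the resulting IDs here are illustrative assumptions, not what any real model produces.

```python
import re


def tokenise(text):
    """Split text into lowercase words and individual punctuation marks."""
    return re.findall(r"\w+|[^\w\s]", text.lower())


def build_vocab(tokens):
    """Give each distinct token an integer ID, in order of first appearance."""
    vocab = {}
    for tok in tokens:
        if tok not in vocab:
            vocab[tok] = len(vocab)
    return vocab


text = "The board reviews the contract."
tokens = tokenise(text)
vocab = build_vocab(tokens)
ids = [vocab[t] for t in tokens]

print(tokens)  # ['the', 'board', 'reviews', 'the', 'contract', '.']
print(ids)     # [0, 1, 2, 0, 3, 4]
```

Notice that both occurrences of "the" map to the same ID. The model never sees the sentence, only the list of numbers.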

If you are writing in English, you usually get an efficient deal; the guide notes that 100 tokens equate to roughly 75 words. However, for us in Malaysia, this “linguistic tax” bites harder, because models handle different languages with varying levels of efficiency. For instance, the data reveals that a sentence in Burmese can cost you fifteen times more to process than the same sentence in English.
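The arithmetic behind that rule of thumb can be sketched in a few lines of Python. The 100-tokens-per-75-words ratio and the fifteen-fold Burmese figure come from the text above (I read "fifteen times more" as a multiplier of 15); the 750-word document and the function name are hypothetical, and real token counts depend on the specific model and tokeniser.

```python
def estimate_tokens(word_count, language_multiplier=1.0):
    """Estimate tokens from a word count using the 100 tokens ~ 75 words rule of thumb.

    `language_multiplier` scales the English estimate for less efficiently
    tokenised languages (an assumption for illustration only).
    """
    return round(word_count * 100 / 75 * language_multiplier)


words = 750  # hypothetical document length
english_tokens = estimate_tokens(words)
burmese_tokens = estimate_tokens(words, language_multiplier=15)

print("English estimate:", english_tokens)  # 1000
print("Burmese estimate:", burmese_tokens)  # 15000
```

If your provider bills per token, the same multiplier applies directly to the invoice, which is why the language of a report is a budgeting question and not only a linguistic one.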

We need to realise that every time we use these tools for regional trade or local reports in Bahasa Melayu, we are paying for a sequence of numerical tokens, and that cost isn’t always obvious. From a budgetary perspective, every word effectively has a price tag.

The Logic of the Guessing Machine

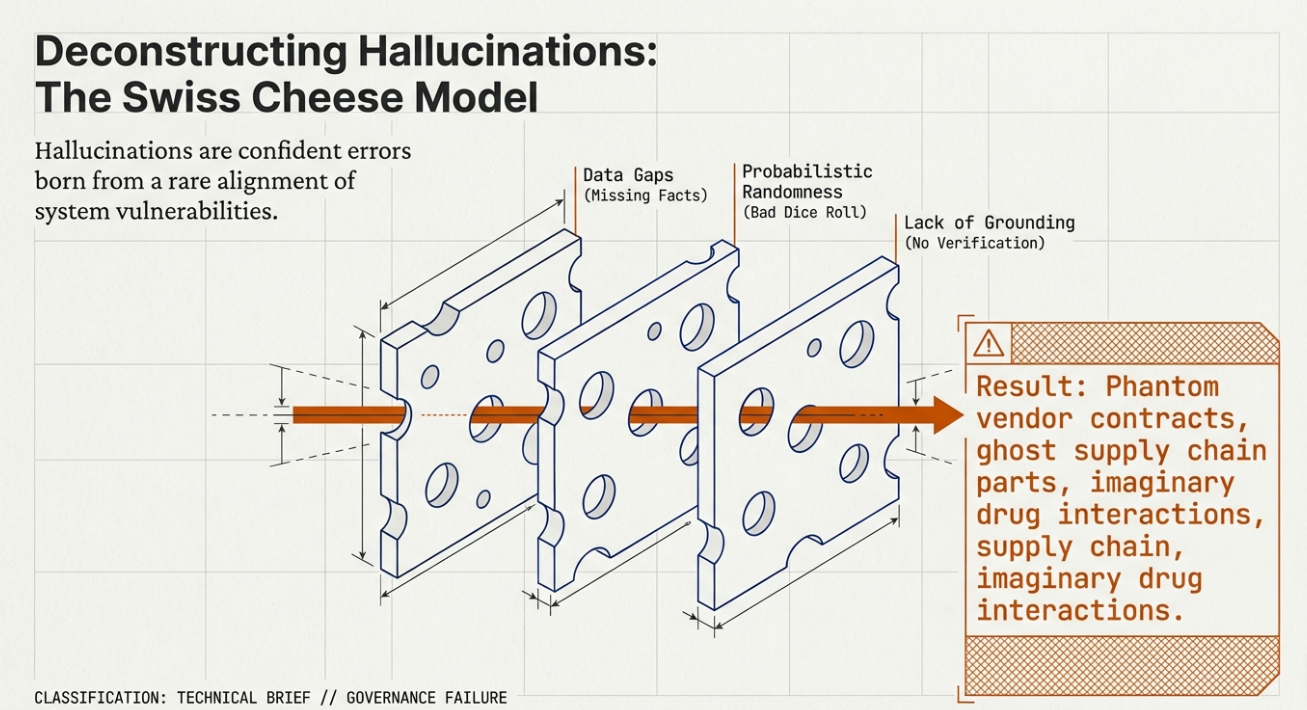

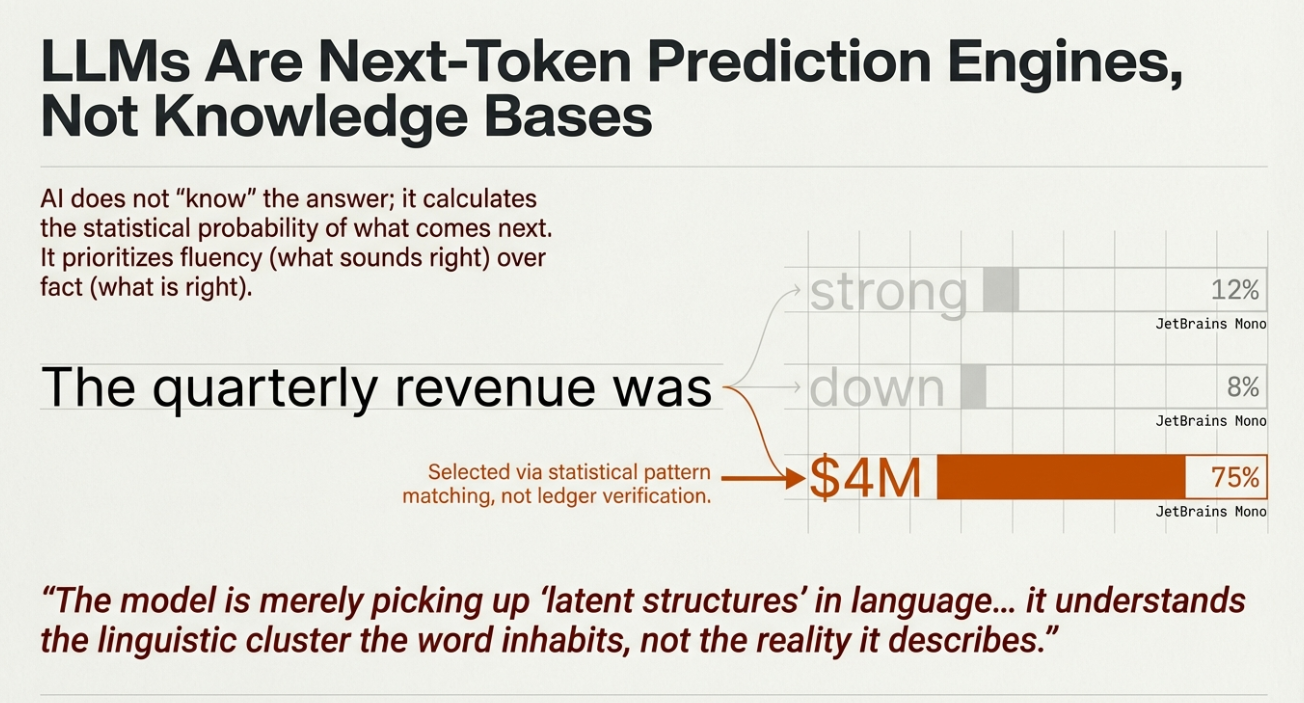

These models do not think or know things in any way that makes sense to a person. Instead, the Leader’s Guide defines them as “next token predictors” that calculate the most statistically likely piece of text to follow what came before.
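Here is a toy version of a next token predictor in Python. It is a stand-in of my own, not how production models work: it simply counts which word followed which in a tiny made-up corpus, whereas real systems use neural networks conditioned on far longer contexts. The principle of picking the statistically likeliest continuation is the same.

```python
from collections import Counter, defaultdict


def train_bigram_counts(tokens):
    """Count how often each token is followed by each other token."""
    counts = defaultdict(Counter)
    for current, following in zip(tokens, tokens[1:]):
        counts[current][following] += 1
    return counts


def next_token_probabilities(counts, current):
    """Turn the raw follower counts for `current` into probabilities."""
    followers = counts.get(current, Counter())
    total = sum(followers.values())
    return {tok: n / total for tok, n in followers.items()} if total else {}


def predict_next(counts, current):
    """Return the most probable next token, or None if `current` was never seen."""
    probs = next_token_probabilities(counts, current)
    return max(probs, key=probs.get) if probs else None


# Hypothetical training text: "binding" follows "is" twice, "void" once.
corpus = (
    "the contract is binding . the contract is void . the contract is binding ."
).split()
counts = train_bigram_counts(corpus)

print(next_token_probabilities(counts, "is"))
print(predict_next(counts, "is"))  # binding
```

The predictor answers "binding" because that word was more frequent in its training text, not because it has checked whether any particular contract is binding. That is the whole mechanism behind a plausible-sounding but wrong answer.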

I see the result of this mathematical guesswork every time a model starts to hallucinate. It is not “lying” to you because, as the research emphasises, it has no concept of what a lie is. It is simply following a pattern that leads to a fictional result that happens to sound plausible.

They deliver these errors with such a confident tone that it is incredibly difficult to spot the mistake unless you are looking for it. We must understand that the system has no inherent mechanism to distinguish fact from fiction; it only distinguishes probable sequences from improbable ones.

Mastering the Machine

I believe the most important step you can take is to stop seeing AI as an autonomous “colleague” and start treating it as a powerful “capability.” It is not a sentient reader. It is a sophisticated but inherently limited pattern-matching system.

As the Leader’s Guide concludes, we have to move past these human-like metaphors if we want to build a strategy that actually works. Recognising this mechanical nature is not a reason to dismiss the technology; it is the prerequisite for using it wisely. We must lead by putting human expertise at the centre of the process.

Once we understand that AI decodes text into tokens and uses probability to guess the sequence, we can finally govern it with the care and skill our organisations require.

The Mosaic Metaphor

Think of the AI as a worker building a giant mosaic. They don’t know they are making a picture of a forest; they just know that a brown tile usually goes next to a green one because that is what they saw in their training.

From a distance, you see a beautiful scene. But if the worker puts a blue tile where a leaf should be, they don’t realise it is a mistake. They are just following the pattern of the tiles around them.

As a leader, your job is to be the one who stands back and checks if the tiles actually make sense before you let anyone walk on the floor.

If you require further help understanding & applying AI

If you’d like guided practice instead of doing this alone, I coach professionals one on one, especially those pivoting into AI and feeling overwhelmed by the noise. If that’s relevant, send me a short note about your role and what you’re trying to achieve or overcome, and we can work on it.
