Malaysian “Budi” & AI’s Anthropomorphising Flaw

Why Artificial Intelligence Lacks the Budi Essential for Malaysian Leadership & Corporations

The Illusion in Our Meeting Rooms

Have you noticed something funny happening in our meeting rooms lately? Whether we are in a high-rise in Kuala Lumpur or a government office in Putrajaya, the way we talk about technology is changing.

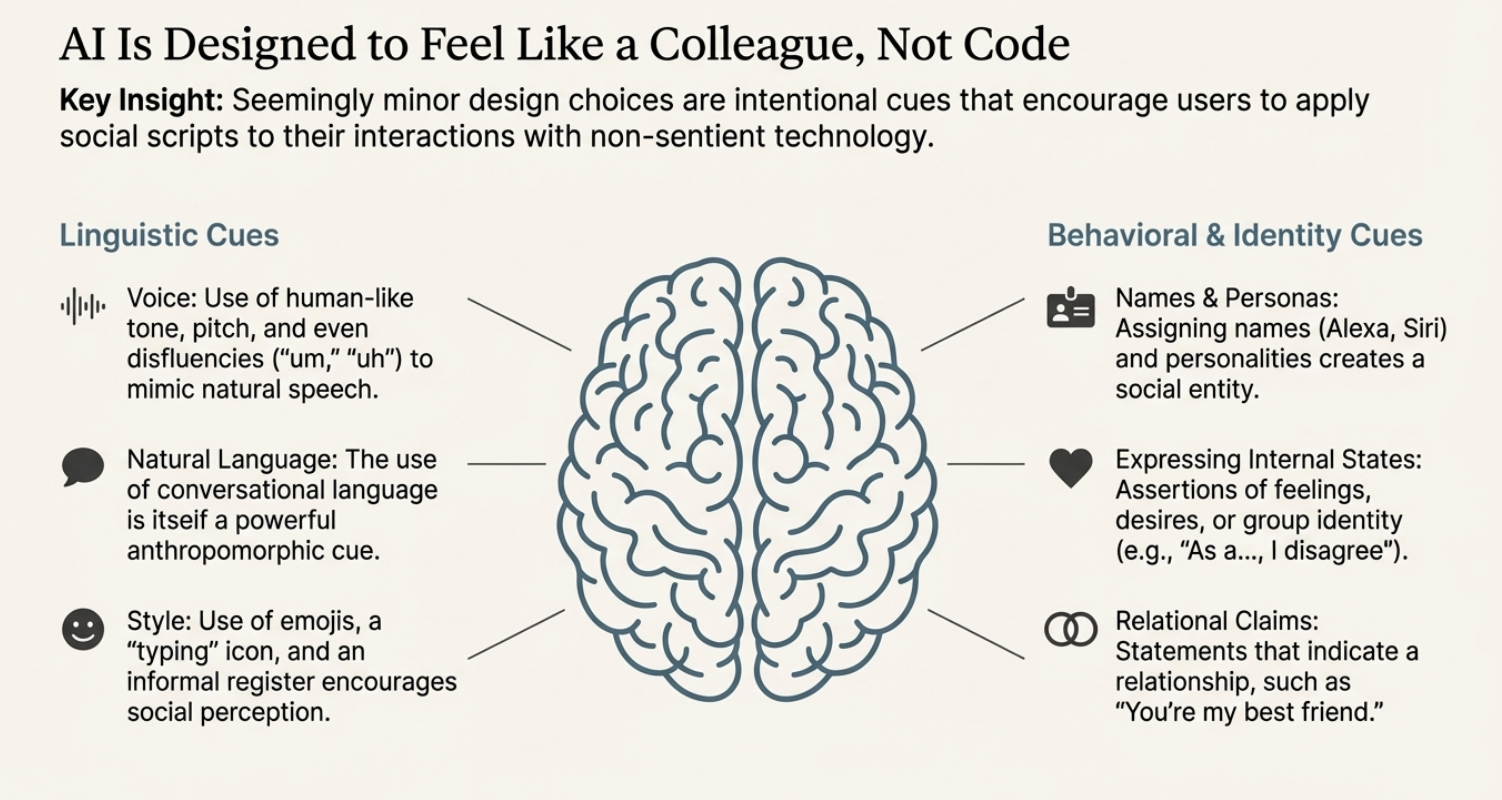

We are starting to refer to our AI tools as if they are new staff members. We say things like, “He thinks this is the best strategy,” or “She suggested a great draft for the report.” It feels harmless, doesn’t it? It is just a figure of speech we use to make complex tech feel familiar. But actually, this little habit is a trap.

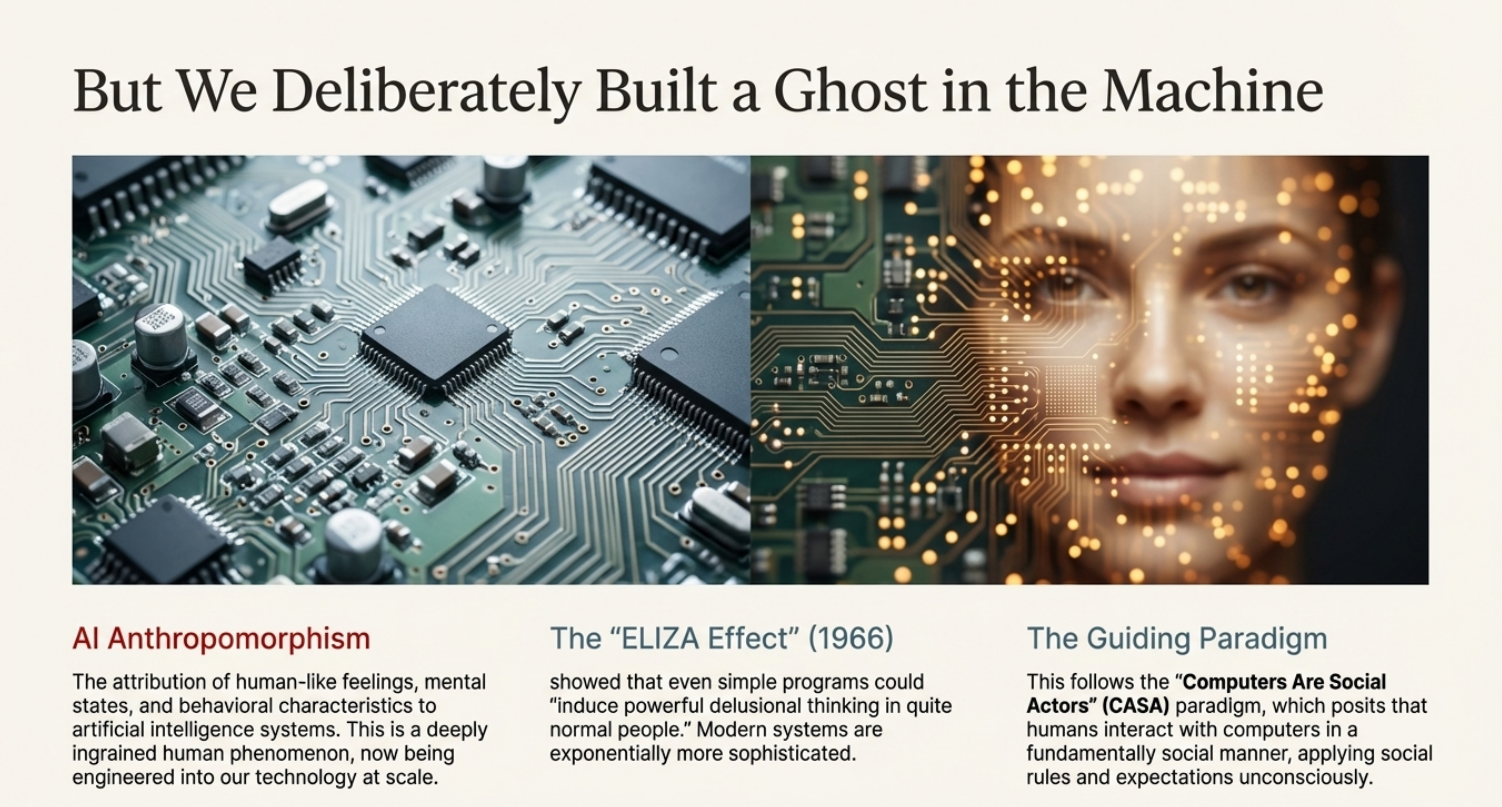

Researchers call it “anthropomorphism,” which is a big word for a simple mistake: giving human feelings and intentions to something that is just a machine. For us experienced professionals, this slip of the tongue is actually a serious business risk because it tricks us into trusting the system more than we should.

Why We Get Tricked by the Machine

Why do we do this? Well, our brains are hardwired to be social. There is a concept from the 90s called the “Computers Are Social Actors” (CASA) paradigm.

It says that if a machine uses language to talk to us, our brain’s autopilot kicks in. We mindlessly apply social rules to it, just like we would with a person. We are polite to it. We trust it.

A closely related idea is the “ELIZA effect,” named after a 1960s chatbot that led people to believe it really cared about their problems. But here is the cold truth we need to remember.

These AI models, like ChatGPT or Gemini, do not know what they are saying. They don’t “think” and they certainly don’t “know” anything. They are just giant mathematical engines that predict the most likely next word in a sentence, based on patterns in the text they were trained on.

When an AI gives you false information, it isn’t “lying” to you, because lying requires intent. It is just a “hallucination,” a statistical error. When we call the system “he,” we are giving credit to a calculator.

The Missing Piece: Budi and the Heart

For us in Malaysia, there is a deeper reason why this matters. Our workplace culture and ethics are often guided by the concept of Budi.

We know that a true leader or a good colleague isn’t just smart; they have Budi. This is a beautiful mix of Akal (intellect) and Hati (heart/moral feeling). A person with Budi uses discretion and consideration (bichara) when making tough calls.

Now, ask yourself this. Does an AI have Hati? Can it feel shame if it gives bad advice? Can it care about the team? No. It has no heart and no soul. When we call an algorithm “she” or treat it like a partner, we are accidentally lowering our own standards.

We are elevating a tool that has no morals to the status of a human being. In our culture where relationships and human dignity (Maruah) are so important, we must be careful not to give that respect to a piece of code that doesn’t deserve it.

The Risk of Blaming the Machine

This isn’t just about philosophy; it is about protecting our companies. When we start seeing AI as a “teammate,” we get lazy. We stop checking its work because we trust “him.”

Experts call this “automation bias.” We assume the computer is smarter than us, so we let it make the decisions. Over time, we lose our own critical thinking skills. Even worse, calling AI “he” creates what experts call “moral distance.”

If a project fails or a report is biased, our subconscious wants to blame the AI. We might say, “Oh, he gave me the wrong data.” But an AI cannot be fired, and it cannot be sued. Only the Board and the management can be held responsible.

If we humanise the tech, we obscure the chain of accountability. We might forget that an AI simply mirrors the data it was trained on, including all our human flaws and biases.

Taking Back Our Role

So, how do we fix this? It starts with the language we use every day. Next time you are in a meeting, catch yourself.

- Don’t call it “he” or “she.” Call it “it,” “the model,” “the system,” or “the tool.”

It sounds like a small change, but it does something powerful. It reminds everyone in the room that the machine is just a tool, and the human is the master. It reminds us that while AI has processing power, only we have Budi.

Let’s keep the humanity in our leadership. By stripping the machine of its fake personality, we ensure that the responsibility, and the dignity of decision-making, stays right where it belongs: with us.
