“If AI Can Do This, What’s Left of Me?”

The Silent Identity Crisis in Malaysian Leadership

Imagine you are reviewing a tender proposal. The English is flawless. The data analysis is pristine. It was submitted by a junior executive who joined the company only three months ago.

But something feels “off.”

In Malaysia, we often rely on firasat. It is that gut feeling you get when a deal looks too perfect, or a supplier’s numbers do not match their reputation. You ask the executive a simple question about the supplier’s background in Johor. They stare at you blankly. They cannot answer because the AI they used to write the report did not tell them.

This is the real friction point we are facing. It is not about technology. It is about the value of your experience. For decades, you climbed the corporate ladder because you were the “Sifu.” You knew the answers. You knew the shortcuts through the bureaucracy. You knew which handshake meant “yes” and which meant “maybe.” Now, a software subscription can generate the answers in seconds.

We need to have an honest conversation about this. The hesitation to adopt AI in many Malaysian SMEs and GLCs is usually framed as a cost issue or a skills gap. But often, it is a psychological defence mechanism.

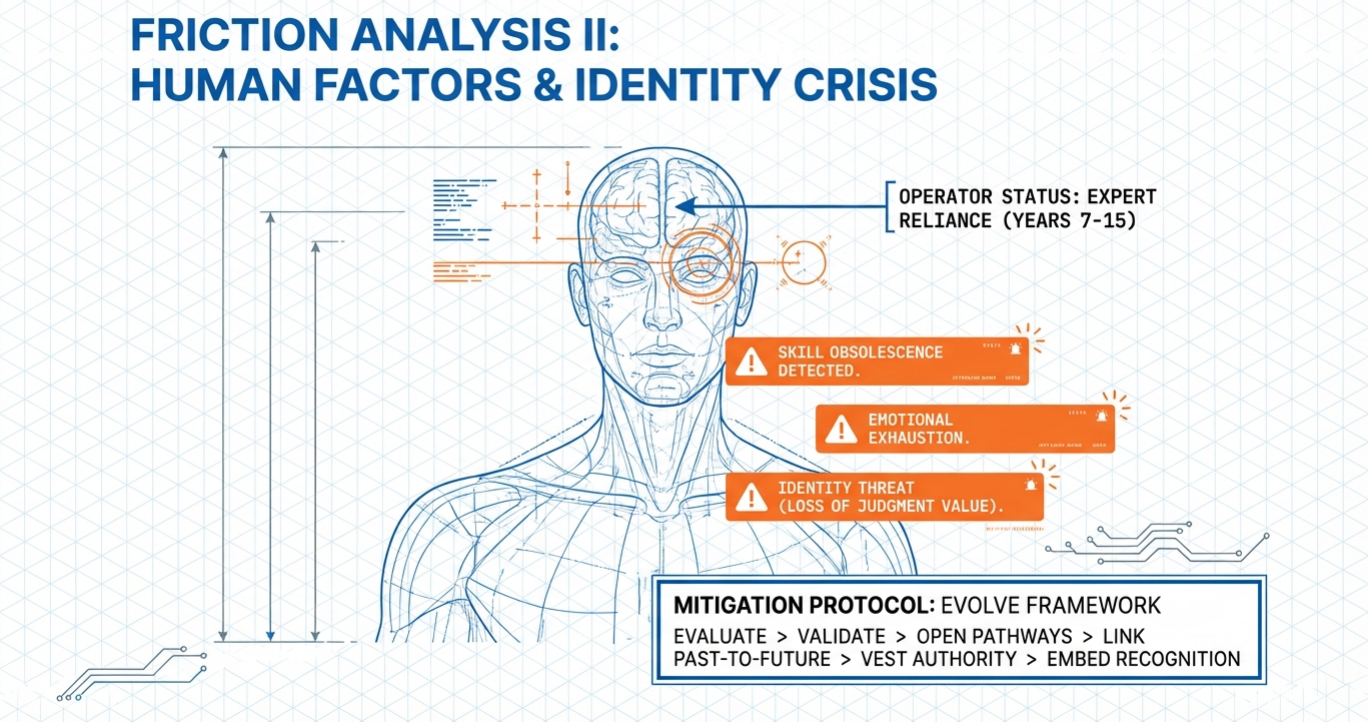

As Kevin Novak, a specialist in transformation psychology, states: “When someone’s sense of self is built on knowing how things work, systematic change to how things work creates an existential professional crisis.” Here is how we move past this crisis and find your new value.

The “Sifu” Trap: When Knowledge Becomes a Commodity

In our corporate culture, we place immense value on tenure. Respect is earned through years of service. We respect the Sifu because they hold the library of knowledge in their head.

For a long time, your value as a manager was defined by your memory. You knew the tax compliance codes for the manufacturing sector. You knew the formatting requirements for a government submission. You were the gatekeeper of information.

Generative AI has broken that gate.

A large language model can recall regulations faster than you. It can draft a compliance letter in seconds. It can summarise the Twelfth Malaysia Plan instantly.

If your professional identity is built on “knowing facts,” AI feels like a thief. It steals the monopoly on information that senior leaders once held. This creates a painful gap between your identity and your daily utility.

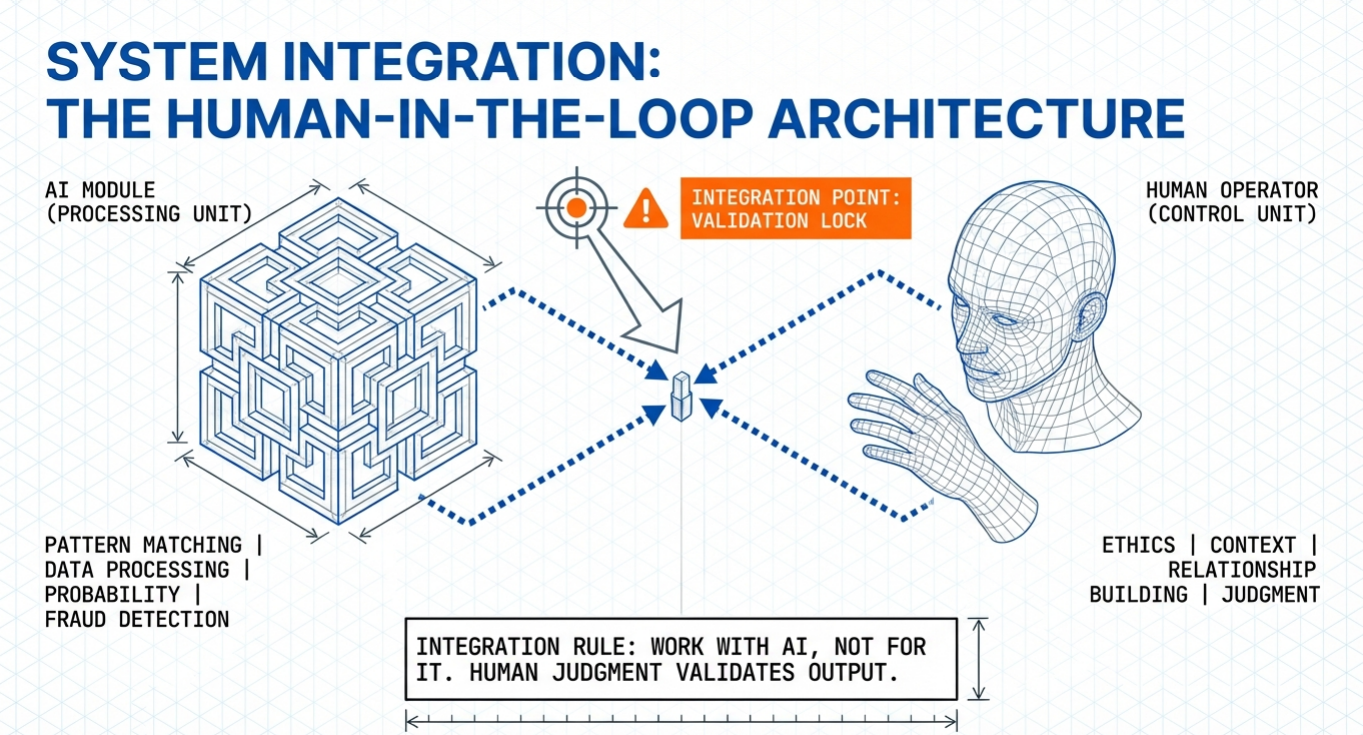

This is where many leaders freeze. They reject the tool to protect their status. But this defensive stance is dangerous. It prevents us from seeing the actual role we need to play. You are no longer needed to be the source of the data. You are needed to be the judge of its truth.

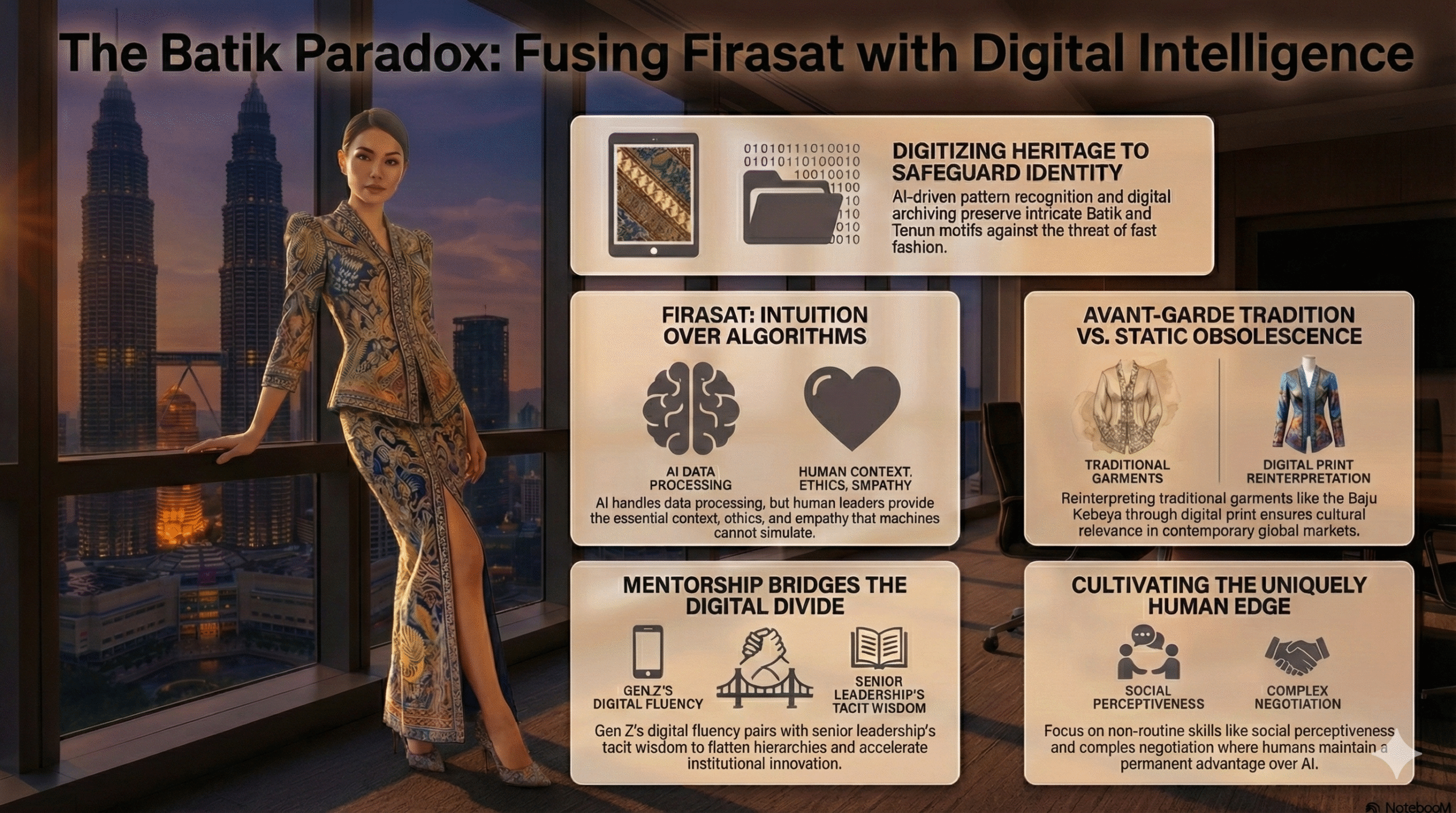

The Batik Analogy: Data vs. Wisdom

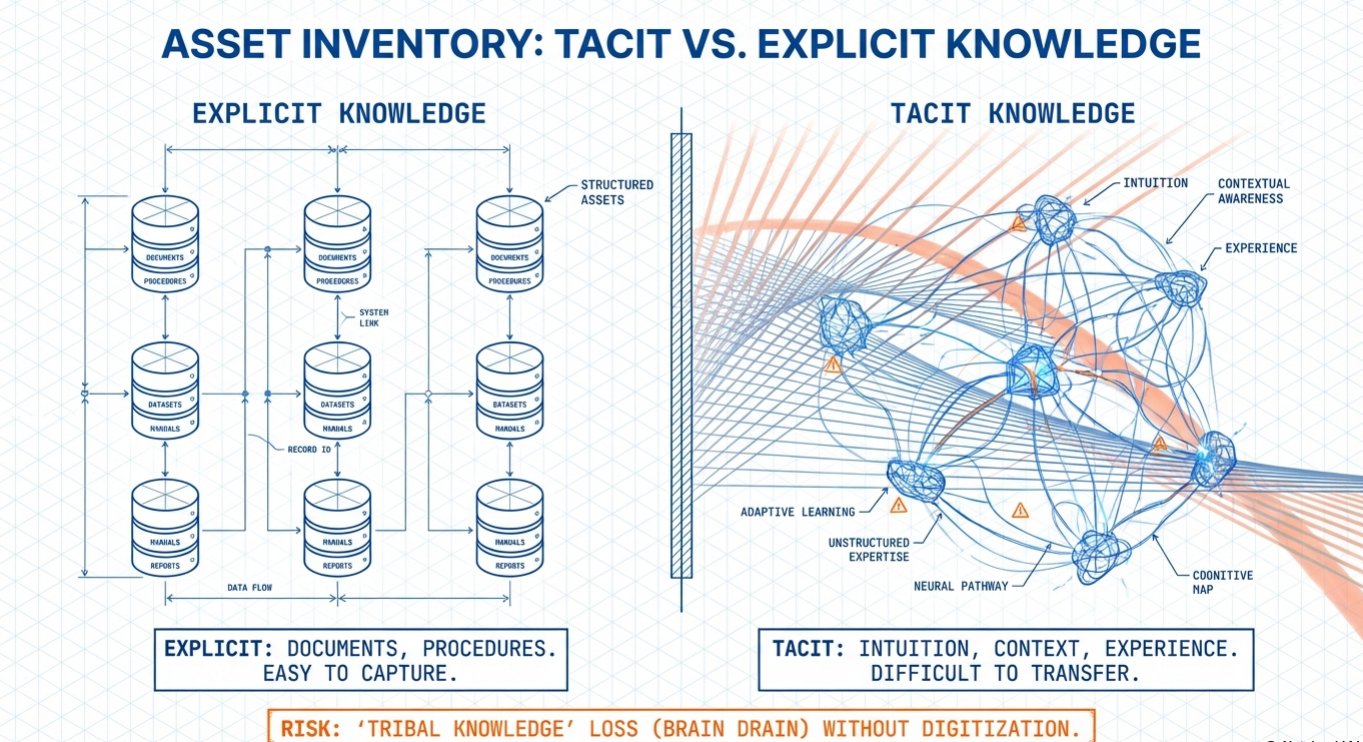

To understand why you are not obsolete, let us look at the difference between two types of knowledge: Explicit and Tacit.

Explicit knowledge is information that can be written down. It is the sales figures, the SOPs, and the technical manuals. AI excels here. It can process millions of data points about textile manufacturing in seconds.

Tacit knowledge is different. It is the know-how residing in your head that is difficult to articulate. It is understanding that the numbers look good, but the client is delaying payment because of an internal family dispute. It is knowing the specific cultural protocol for a Royal wedding versus a corporate dinner.

Think about Malaysian Batik.

Researchers have found that we can use AI to digitise thousands of Batik motifs. We can generate new patterns endlessly. But the AI cannot replicate the cultural soul of the craft. It does not understand why a specific floral motif is used in a specific region for a specific ceremony. It mimics the form, but it misses the meaning.

In your workplace, you are the Batik master. AI can generate the financial report (Explicit Knowledge). But it cannot tell you that the supplier delayed billing to help the relationship during a tough quarter (Tacit Knowledge). AI sees the data point. You see the relationship.

The more content AI generates, the more valuable your context becomes.

The “Yes Man” Problem: Why AI is a Bad Boss

There is a second, uniquely Malaysian risk we need to address. It involves our respect for hierarchy.

Social psychologist Geert Hofstede identified Malaysia as having a very high Power Distance Index. In simple terms, this means our work culture accepts hierarchy. Junior staff are culturally conditioned not to challenge their superiors.

In a traditional setting, if a Director speaks, the Executive listens. The friction arises when we introduce AI into this dynamic.

AI models sound confident. They produce outputs that look professional, authoritative, and “senior.” In the mind of a young executive, the AI can inadvertently become a “Digital Boss.” If ChatGPT says the market trend is X, the junior staff member may hesitate to question it, just as they would hesitate to question you.

This is a significant risk. Junior teams may blindly accept AI errors because they view the computer as an authority figure, and those errors then flow unchallenged into the work that reaches your desk.

This is where you become irreplaceable.

AI has no moral compass. It does not understand the delicate politics of a GLC boardroom. It lacks judgement. Your role must shift from doing the work to auditing the work. You are the only one with the rank and the courage to challenge the machine.

In our high-hierarchy culture, we need human leaders to tell their teams: “The AI is not your boss. It is your intern. Check its work.”

The Danger of Silence: “Shadow AI”

While senior management grapples with this identity crisis, your workforce has likely already moved on.

We are seeing a rise in “Shadow AI.” This happens when employees use AI tools secretly because they are afraid to tell you.

Workplace trends suggest that many young workers hide their AI use from their bosses. They worry that if they admit to using AI, you will think they are lazy. They fear the Sifu will scold them for taking a shortcut.

This generational silence is a governance nightmare.

If a junior executive pastes sensitive company data into a public AI model to write a summary, that data may be compromised. But they will not tell you they are doing it. They fear your judgement more than the security risk.

By allowing our own insecurities to make AI a taboo subject, we are driving usage underground.

You must break this silence. You need to say, “I know these tools exist. I am learning them too. Let us figure out how to use them safely.” When you validate the tool, you regain control.

From Oracle to Arbiter

You stop being the “Oracle” who knows all the answers. You become the “Arbiter” who decides what matters.

Your new value lies in three specific areas:

- Contextual Judgement: You know the history of the client and the politics of the stakeholder. You apply this filter to the AI’s raw output. The AI gives you a map, but you decide if the road is safe to drive on.

- Ethical Oversight: You ensure the speed of AI does not compromise the integrity of the firm. You are the human safety brake.

- Mentorship: You must teach the next generation not how to do the task, but how to judge the quality of the task. You are teaching them taste, discernment, and standards.

The invention of the calculator did not replace the accountant. It just stopped the accountant from doing long division on paper. It allowed them to focus on financial strategy. AI is the engine. You are the steering wheel.

Let us stop asking, “What does the machine take away?” and start asking, “What does the machine allow me to focus on?”

The answer is likely the very things that got you promoted in the first place: your judgement, your relationships, and your ability to lead people. The machine cannot take those. It can only make them more important.

If you require further help understanding & applying AI

If you’d like guided practice instead of doing this alone, I coach professionals one-on-one, especially those pivoting into AI and feeling overwhelmed by the noise. If that’s relevant, send me a short note about your role and what you’re trying to achieve or overcome, and we can work on it.
