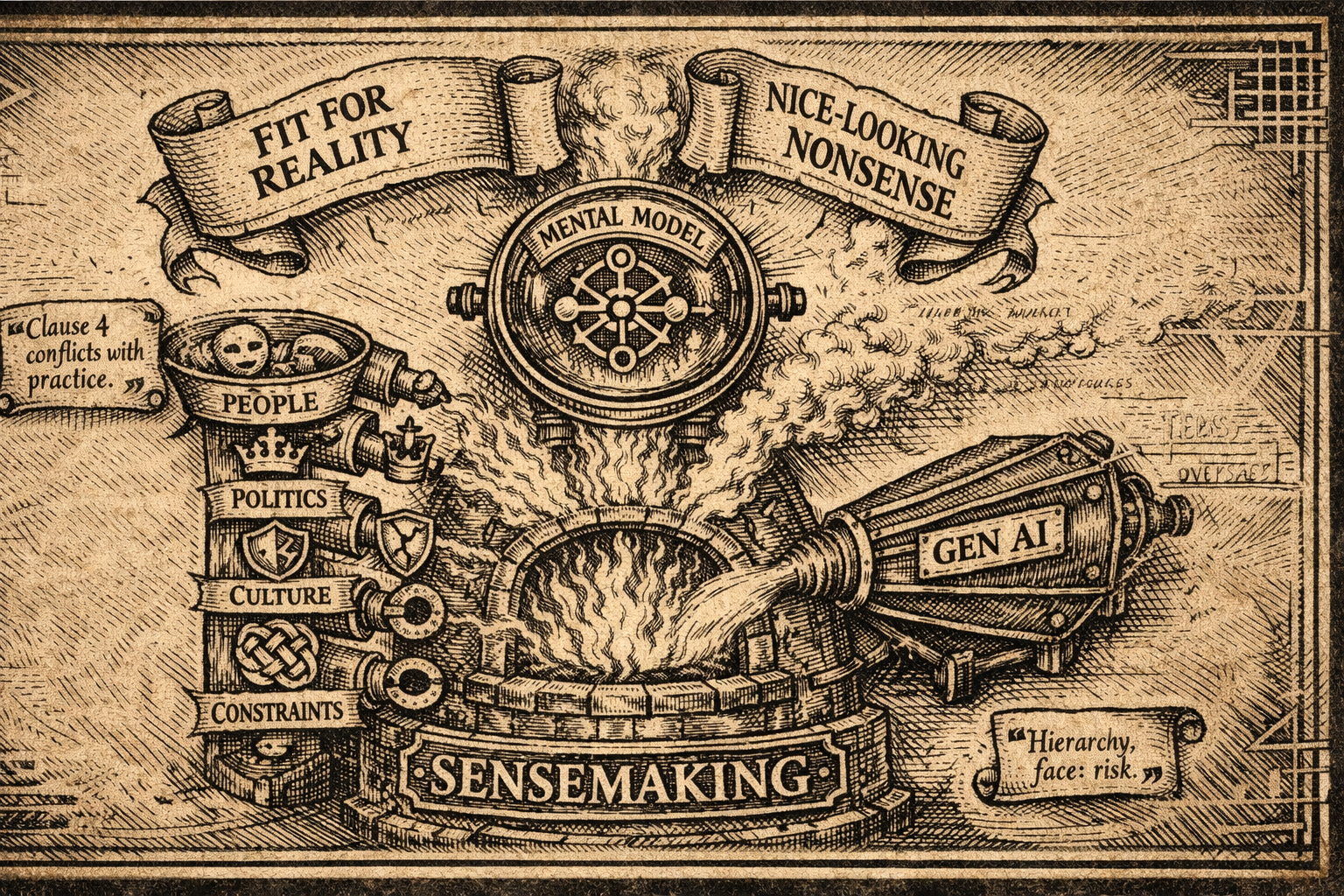

Your Mental Model Is the Real Asset.

Gen AI Is Just the Fast Typist.

Why polished AI drafts can mislead senior leaders, and how to keep sensemaking in your hands.

Hypothetical scenario

WhatsApp, 9:12am (Project Group Chat)

You: “AI drafted the new policy. Looks solid. I’ll table it in the 10am.”

Colleague: “Clause 4 conflicts with our long-standing practice.”

HR: “Tone feels… very overseas. Might trigger pushback.”

Ops: “Also ignores how hierarchy works here. People will lose face.”

This is the trap. When the output is fluent, you instinctively feel: “It understands.” It doesn’t. Let’s use this chat-style lens to spot what’s really happening.

What is changing and why AI matters here

As you become more senior, fewer problems come with clear SOPs. What you’re paid for is sensemaking: reading the room, anticipating second-order effects, balancing politics, reputation, and delivery.

That ability sits in your mental models, your internal map of how your organisation works: who matters, what causes what, what “good” looks like, what risks will be tolerated, and which ones will explode later.

These models are living. You refine them every time a rollout succeeds, a stakeholder blocks, or a “small” wording choice turns into a big issue. If you quietly outsource that sensemaking to AI, you don’t just risk a bad document. You weaken the very capability that makes you valuable.

How Gen AI actually works in this article’s context

WhatsApp decode (what the tool is doing):

AI (implicitly): “I’ve seen many policies. Here’s a plausible one.”

You (mistaken assumption): “Because it sounds coherent, it fits our reality.”

Gen AI is trained on large amounts of text and learns patterns of what typically follows what. When you prompt it, it predicts a likely continuation. It can produce structure, tone, and professional phrasing fast. But it does not hold your organisation’s unwritten rules, cultural expectations, stakeholder histories, or long-term responsibilities in its head.

It generates plausible language, not grounded organisational meaning. That’s why it can sound “smart” and still be misaligned.
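To make "predicts a likely continuation" concrete, here is a deliberately tiny sketch in Python. It is not how a real Gen AI model is built (those use large neural networks, not word counts), and the four training sentences are invented for illustration. It only shows the core idea: the output follows the most common pattern in the training text, whether or not that pattern fits the situation.

```python
from collections import Counter, defaultdict

# Invented policy-style sentences standing in for "large amounts of text".
CORPUS = [
    "employees must submit leave requests in advance",
    "employees must submit leave forms in advance",
    "managers must approve leave requests in writing",
    "employees must attend the monthly briefing",
]

def train_bigrams(sentences):
    """Count, for each word, which words follow it and how often."""
    follows = defaultdict(Counter)
    for sentence in sentences:
        words = sentence.split()
        for current, nxt in zip(words, words[1:]):
            follows[current][nxt] += 1
    return follows

def most_likely_next(follows, word):
    """Return the most frequent follower of `word`, or None if never seen."""
    if word not in follows:
        return None
    return follows[word].most_common(1)[0][0]

def continue_text(follows, start, max_words=6):
    """Extend `start` one word at a time, always taking the likeliest next word."""
    words = [start]
    for _ in range(max_words):
        nxt = most_likely_next(follows, words[-1])
        if nxt is None:
            break
        words.append(nxt)
    return " ".join(words)

model = train_bigrams(CORPUS)
print(continue_text(model, "employees"))  # employees must submit leave requests in advance
print(continue_text(model, "managers"))   # managers must submit leave requests in advance
```

The second line is the point. The training text says managers approve leave, yet the toy model writes that managers submit it, because "must submit" is the more frequent pattern. The sentence is fluent and wrong for this organisation, and nothing in the model can notice that.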

What this means for an experienced professional in Malaysia

Three common “looks-right” moments:

1) Policy drafts that ignore local norms

AI can sound firm and modern, but miss union realities, religious practices, caregiving expectations, or leaders who still value physical presence.

2) Org charts that seem politically neutral

AI can propose a neat structure from job descriptions, but won’t know which department feels sidelined, which leader needs a legacy win, or which pairing will quietly derail delivery.

3) Consulting outputs that don’t match the client’s business model

AI can generate frameworks and trends, yet miss cost structure, regional constraints, government relationships, and risk appetite.

In all three, your role is not to “accept” the output. Your role is to test it against your mental model, and update that model where the challenge is useful.

How to start experimenting safely and intelligently

A practical way to work (without surrendering thinking):

- Start with your model (first, in bullets): stakeholders, causal links, priorities/values, constraints/risks.

- Use AI for breadth; you own depth: let it generate options, drafts, and alternative framings, then you decide what fits.

- Treat outputs as hypotheses: “What assumptions is this making? Where will it clash? Who will push back, and why?”

- Make team mental models explicit: compare models across leaders; use AI to surface gaps, not to “declare” the truth.

WhatsApp-ready 4-point checklist (before you forward an AI draft):

- What unwritten rule might this violate?

- Which stakeholder reaction is missing here?

- What local wording or tone needs adjustment?

- What second-order effect am I responsible for?

Keep AI as your fast assistant, but keep your mental model in the driver’s seat.

The Interactive Simulator

This page includes a small interactive simulator that complements the article’s core point: Gen AI can produce fluent drafts fast, but fluency is not organisational understanding. The real asset is your mental model: your lived map of stakeholders, norms, constraints, and second-order effects.

The simulator makes that visible in minutes. You click a polished paragraph, it reveals the hidden assumptions inside it, then you adjust simple “reality sliders” to match your organisation. As conditions change, you see how downstream effects shift.

Learning intention: turn a readable idea into a repeatable habit. Treat AI outputs as hypotheses, stress-test them against context, and keep ownership of consequences.
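For readers who like to see the habit written down, the same idea can be sketched in a few lines of Python. This is an illustration only, not the code behind the simulator on this page: the assumption texts, slider names, and 0 to 10 scale are all hypothetical. Each hidden assumption in a draft needs a certain level of some organisational reality to hold, and any assumption your own settings do not support gets flagged for a second look.

```python
from dataclasses import dataclass

@dataclass
class Assumption:
    text: str     # what the draft silently takes for granted
    slider: str   # hypothetical "reality slider" this assumption depends on
    minimum: int  # slider level (0-10) needed for the assumption to hold

def stress_test(assumptions, sliders):
    """Return the assumptions that the given slider settings do not support."""
    return [a for a in assumptions if sliders.get(a.slider, 0) < a.minimum]

# Hypothetical assumptions hidden inside a polished AI-drafted policy.
draft_assumptions = [
    Assumption("Staff will challenge managers openly", "comfort_with_dissent", 7),
    Assumption("Leaders accept remote work", "remote_acceptance", 6),
    Assumption("Changes need no union consultation", "union_flexibility", 8),
]

# Your reading of your own organisation (illustrative values).
sliders = {"comfort_with_dissent": 3, "remote_acceptance": 7, "union_flexibility": 4}

for a in stress_test(draft_assumptions, sliders):
    print(f"Check before forwarding: {a.text}")
```

With these illustrative values, the first and third assumptions are flagged. The numbers matter less than the discipline: the slider settings come from your mental model, not from the AI.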
